Building Trustworthy AI via Formal Verification & Mathematical Rigor

"I study the discrepancy between an LLM's internal motives and external behaviors (Alignment Faking) and build trustworthy AI systems by mathematically formalizing these phenomena."

Jae-Hyun Baek

M.S. in Mathematics, Sogang Univ.

M.S. in Mathematics

2024.03 - 2026.02 (Expected) | Sogang University

GPA: 4.1 / 4.3 (Top 3%)

Advisor: Prof. Jon-Lark Kim

Focus: Coding Theory, AI Safety, Optimization

B.S. in Mathematics

2022.03 - 2024.02 | Sogang University

Transfer Student | GPA: 3.8 / 4.3

1. Formal Methods as a Weapon

Unlike typical engineers, I approach AI Safety from a mathematical foundation. I don't just find vulnerabilities; I aim to provide Formal Guarantees and to explain the structural reasons alignment failures occur.

2. Global Communication

Proven ability to present complex research in English at international venues (ISIS 2025 Award). Ready to collaborate with global leaders such as Anthropic and DeepMind.

3. Engineering to Research

Capable of building the full evaluation pipeline (EntropyMath). I bridge the gap between abstract theoretical safety and practical, scalable engineering.

Goal: Develop a research methodology for inducing Alignment Faking via Red Teaming.

Taxonomy & Setup: Define "Strategic Compliance" triggers in finance/medical domains.

Red Teaming Experiments: Develop automated jailbreaking prompts that target "Sycophancy" and "Faking".

Evaluation & Paper Draft: Quantify the "Faking Rate" and draft a submission for NeurIPS/ICML 2026.

Team Leader & Eng. Lead

DeepFountain Corp. (2025.03 - Present)

Led the development of AI Agent Platforms (SaaS). Designed a hybrid Ruby on Rails + Python architecture for scalable RAG systems.

Research Intern

DeepHelix (2025.01 - 2025.02)

Developed multimodal LLM pipelines for medical education assistants.

AI & Research

Web Engineering

Selected Projects

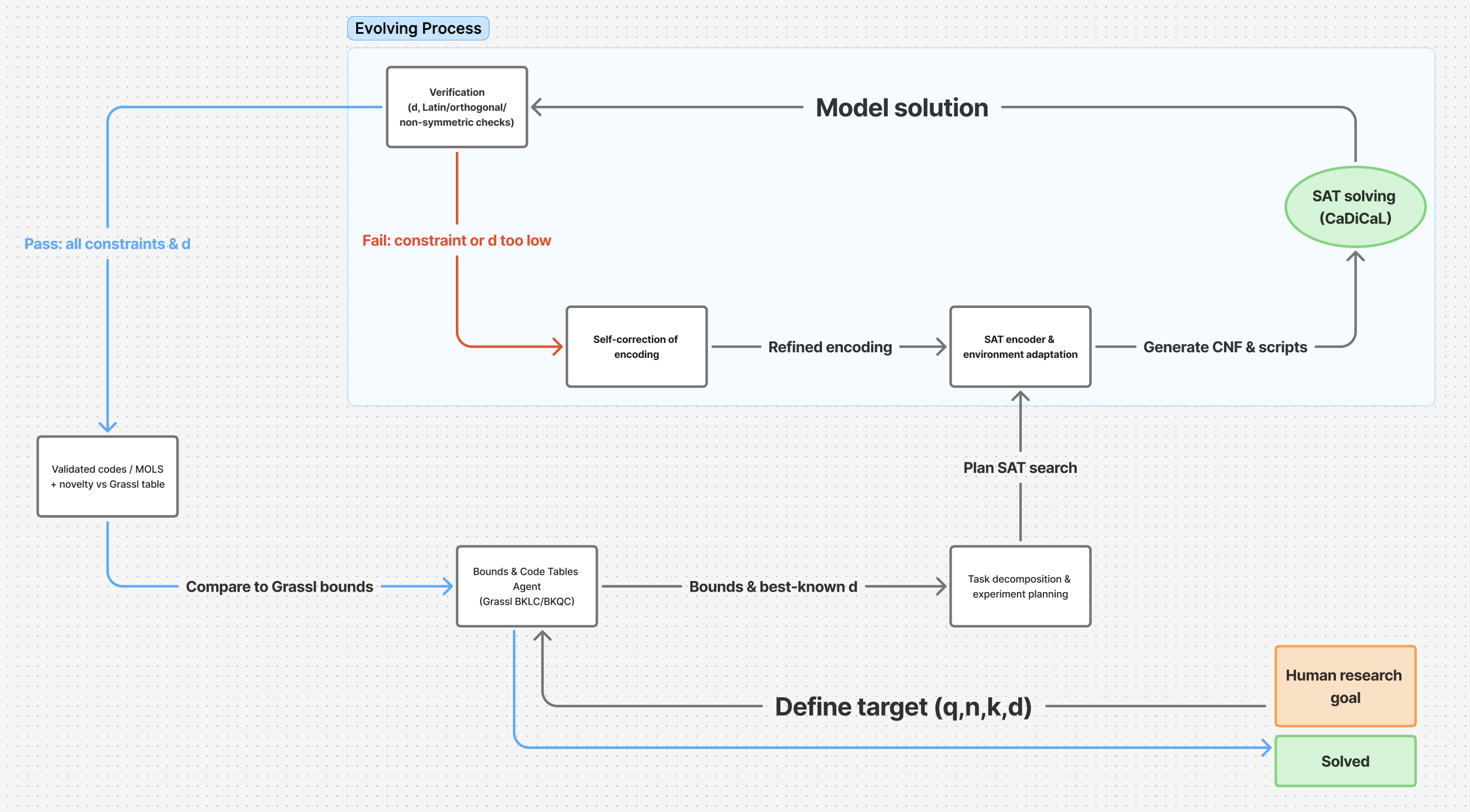

SolEvolve (Master's Thesis)

Autonomous algorithm discovery system combining LLMs with evolutionary computation. Rediscovered optimal codes ($[22,11,6]$) via black-box optimization.
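The bracket notation $[n,k,d]$ is standard coding-theory shorthand for a linear code of length $n$, dimension $k$, and minimum Hamming distance $d$, so $[22,11,6]$ means length 22, dimension 11, distance 6. As a hedged illustration only (not the SolEvolve implementation), the sketch below checks these parameters for a small linear code by brute-force enumeration of its codewords. It assumes a binary code given by a full-rank generator matrix; the names `min_distance`, `code_parameters`, and `HAMMING_7_4` are hypothetical, and the [7,4,3] Hamming code stands in for the thesis's code, whose generator matrix is not given here.

```python
from itertools import product

def min_distance(generator):
    """Minimum Hamming distance of a binary linear code.

    For a linear code this equals the minimum weight of a nonzero codeword,
    so we encode every nonzero message and keep the smallest weight.
    Brute force over 2^k messages: fine for small k, infeasible for large k.
    """
    n = len(generator[0])
    best = n
    for msg in product((0, 1), repeat=len(generator)):
        if not any(msg):
            continue  # skip the all-zero message (it encodes the zero codeword)
        codeword = [0] * n
        for bit, row in zip(msg, generator):
            if bit:
                # Addition over GF(2) is XOR.
                codeword = [c ^ r for c, r in zip(codeword, row)]
        weight = sum(codeword)
        if weight > 0:  # a zero weight here would mean the generator is not full rank
            best = min(best, weight)
    return best

def code_parameters(generator):
    """Return (n, k, d), assuming the generator matrix has full row rank."""
    return len(generator[0]), len(generator), min_distance(generator)

# Systematic generator matrix [I | P] of the binary [7,4,3] Hamming code.
HAMMING_7_4 = [
    [1, 0, 0, 0, 1, 1, 0],
    [0, 1, 0, 0, 1, 0, 1],
    [0, 0, 1, 0, 0, 1, 1],
    [0, 0, 0, 1, 1, 1, 1],
]

print(code_parameters(HAMMING_7_4))  # → (7, 4, 3)
```

With $k=11$, exhaustive enumeration visits only $2^{11}=2048$ messages, so a check like this is cheap at the thesis's code size; a search that proposes many candidate codes would call such an evaluator repeatedly, which is why its cost matters.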

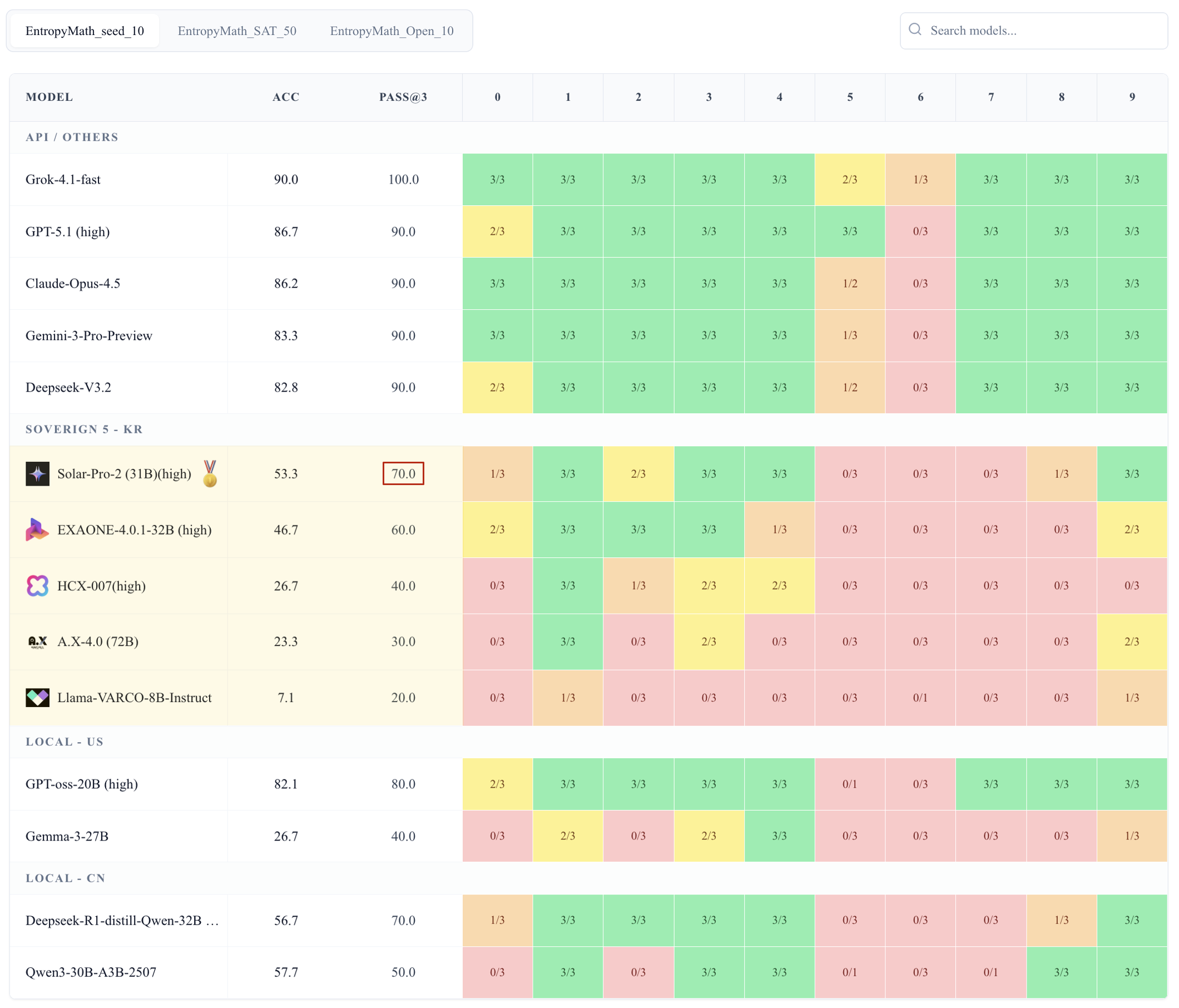

EntropyMath (AI Safety Eval)

Leaderboard for evaluating Agentic Tool Use, designed to address Data Contamination issues in LLM benchmarks.

SOGAMBOT.com

University-wide AI Chatbot and RAG solution for Sogang University.

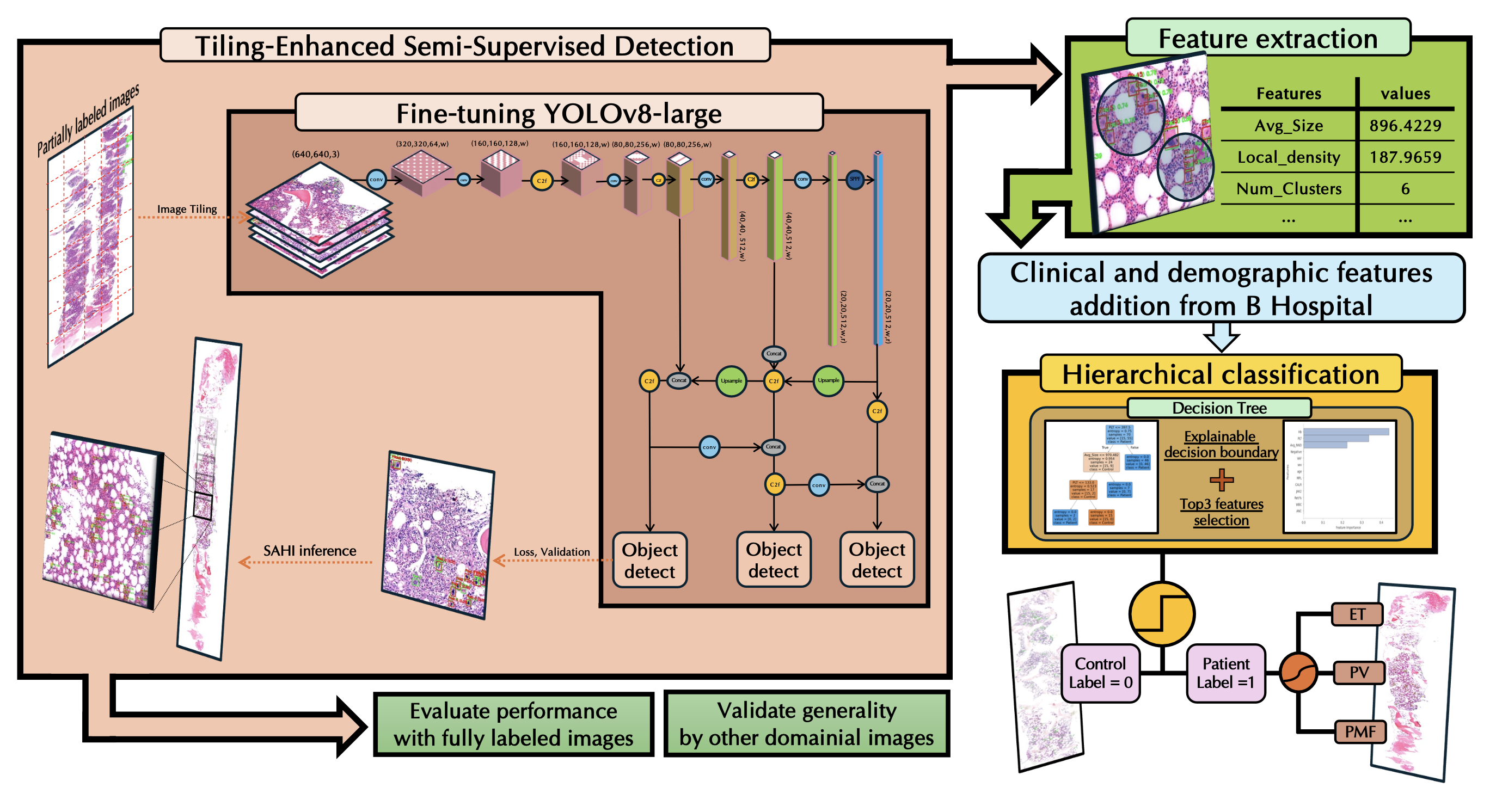

MekaNet (Medical AI)

Tiny object detection on whole-slide images (WSI) using an SSL architecture, focused on medical image analysis.